One of our internal production environments runs an OpenShift 3.7 cluster. With OKD 3.10 (the new name for OpenShift Origin) rolling out, 3.7 is far too old and is missing some useful new features.

"Let's get it upgraded!" I shouted, and then the nightmares started pouring out.

Day 1: Let's Upgrade, Ansibly

OKD relies heavily on Ansible scripts for cluster management. According to the documentation, OpenShift can be upgraded either in place or with a blue-green deployment. In the latter, the control plane (the masters, in our cluster) is upgraded to the next release first. Then a number of new nodes labeled blue are added to the cluster, the pods are evacuated to the blue nodes, and the old nodes are deleted from the cluster.
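The node switch in a blue-green upgrade boils down to a few `oc` calls. This is a minimal sketch rather than the documented procedure: the `color` label values, the helper names `label_nodes` and `evacuate_color`, and the `DRY_RUN` switch are assumptions for illustration. By default it only prints the commands it would run.

```shell
# Sketch of a blue-green node switch.
# Assumptions (not from the post): nodes are grouped by a "color" label,
# old nodes are labeled green, new nodes blue, and DRY_RUN=1 (the
# default) only prints the oc commands instead of executing them.

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Usage: label_nodes COLOR NODE...
label_nodes() {
  color=$1
  shift
  for node in "$@"; do
    run oc label node "$node" "color=$color" --overwrite
  done
}

# Stop scheduling onto all nodes of one color, then evacuate their pods.
evacuate_color() {
  run oc adm manage-node --selector="color=$1" --schedulable=false
  run oc adm manage-node --selector="color=$1" --evacuate
}
```

For example, `label_nodes blue node3 node4` followed by `evacuate_color green` moves the workload off the old nodes; rerun with `DRY_RUN=0` once the printed commands look right.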

A blue-green upgrade should be the safer way. Since it was my first time upgrading an OpenShift cluster, I decided to run the Ansible scripts to upgrade the masters first. Because an upgrade cannot skip a release, running the script only bumps the version to the next release. There is, of course, one exception: 3.7 to 3.9, because 3.8 was never officially released. Running the upgrade on OpenShift 3.7 makes the script upgrade from 3.7 to 3.8 and then from 3.8 to 3.9 automatically.
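Running the upgrade one stage at a time, masters first, can be wrapped in a small helper. The playbook paths below assume the release-3.9 layout of openshift-ansible installed under `/usr/share/ansible/openshift-ansible` (set `OA_DIR` for a git checkout); `upgrade_stage` and `DRY_RUN` are hypothetical helpers, and by default nothing is executed.

```shell
# Sketch: run the 3.7 -> 3.9 upgrade one stage at a time.
# Assumptions: release-3.9 layout of openshift-ansible under OA_DIR,
# and DRY_RUN=1 (the default), which only prints the command.
OA_DIR=${OA_DIR:-/usr/share/ansible/openshift-ansible}
UPGRADE_DIR="$OA_DIR/playbooks/byo/openshift-cluster/upgrades/v3_9"

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Usage: upgrade_stage INVENTORY control_plane|nodes
upgrade_stage() {
  case "$2" in
    control_plane|nodes)
      run ansible-playbook -i "$1" "$UPGRADE_DIR/upgrade_$2.yml" ;;
    *)
      echo "unknown stage: $2" >&2
      return 1 ;;
  esac
}
```

Keeping the stages separate leaves room to check `oc get nodes` and the master version between `upgrade_stage hosts control_plane` and `upgrade_stage hosts nodes`.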

Unfortunately, the upgrade was incredibly slow, and the first run failed at TASK [Upgrade all storage]. The OpenShift web console and all the pods were still working, however, so I simply re-ran the Ansible playbook before going to bed.

And that was the first day.

Day 2: Versions in Chaos

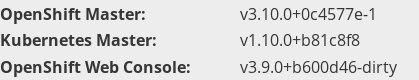

When I returned to the terminal, everything looked fine and unchanged. Everything was working and familiar until I checked the versions.

As expected, the masters and nodes were running different versions. But the masters had been upgraded to 3.10 instead of the expected 3.9.

It was a mess! I quickly confirmed that the openshift-ansible repository was on the release-3.9 branch and that openshift_version was set to 3.9. I ran the Ansible playbook to upgrade the nodes, but since the masters were running 3.10, the nodes could not be upgraded to 3.9. Nor could they be upgraded directly to 3.10, since an upgrade cannot skip a release!
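The manual check above (is the inventory really pinned to 3.9?) can be scripted as a pre-flight step. This sketch assumes the release is pinned by an `openshift_version=` or `openshift_release=` line in an INI inventory; `inventory_version` and `check_target` are hypothetical helpers. It only inspects the inventory, so it would not have caught the playbook itself going past 3.9.

```shell
# Sketch: confirm the inventory pins the release we expect before upgrading.
# Assumes openshift_version=... or openshift_release=... (optionally quoted,
# optionally prefixed with "v") on its own line in an INI inventory.

# Print the first pinned version found, e.g. "3.9".
inventory_version() {
  sed -n -E "s/^[[:space:]]*openshift_(version|release)[[:space:]]*=[[:space:]]*[\"']?v?([0-9][0-9.]*)[\"']?.*/\2/p" "$1" | head -n 1
}

# Usage: check_target INVENTORY EXPECTED_RELEASE
# Succeeds only if the pinned version is EXPECTED_RELEASE or a patch of it.
check_target() {
  found=$(inventory_version "$1")
  case "$found" in
    "$2"|"$2".*) return 0 ;;
    *) echo "inventory pins '${found:-nothing}', expected $2" >&2; return 1 ;;
  esac
}
```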

Day 4: Rebuild Masters and Node Config Maps

OpenShift 3.10 introduces a new openshift-node project and node config maps for bootstrapping nodes. Therefore, every node must specify an openshift_node_group_name property in the inventory file. Somehow, as if by magic, this cluster with OKD 3.10 masters was running without it! I had to fix that first by running

$ ansible-playbook -i <inventory_file> openshift_node_group.yml

and then I could perform most other operations.
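Before running that playbook, it helps to see which hosts are missing the property. A minimal sketch, assuming an INI inventory with one host per line under an exact `[nodes]` header; `missing_node_groups` is a hypothetical helper. In 3.10 the default group names are `node-config-master`, `node-config-infra` and `node-config-compute`.

```shell
# Sketch: list hosts under [nodes] that lack openshift_node_group_name.
# Assumes one host per line; blank lines and comments are skipped.
missing_node_groups() {
  awk '
    /^\[/ { in_nodes = ($0 == "[nodes]"); next }
    in_nodes && NF && $1 !~ /^#/ && $0 !~ /openshift_node_group_name=/ { print $1 }
  ' "$1"
}
```

An empty result from `missing_node_groups hosts` means every node entry carries a group name.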

Day 5: Scale-up and Replace

To fix the version inconsistency between the nodes and the masters, I came up with a new idea: scale up the cluster and knock out the old nodes. Since pods can be evacuated to other nodes, this should work just like a blue-green upgrade. And it worked, at least for a while.
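The scale-up-and-replace idea can be sketched as two steps. Assumptions: the new hosts are listed under `[new_nodes]` in the inventory (with `new_nodes` added to `[OSEv3:children]`), the 3.10 layout of openshift-ansible lives in `OA_DIR`, and `scale_up`, `retire_nodes` and `DRY_RUN` are hypothetical helpers that only print commands by default.

```shell
# Sketch: add new nodes, then drain and remove the old ones.
# Assumptions: [new_nodes] group in the inventory, 3.10 playbook layout
# under OA_DIR, and DRY_RUN=1 (the default) only prints commands.
OA_DIR=${OA_DIR:-/usr/share/ansible/openshift-ansible}

run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# Usage: scale_up INVENTORY
scale_up() {
  run ansible-playbook -i "$1" "$OA_DIR/playbooks/openshift-node/scaleup.yml"
}

# Usage: retire_nodes NODE...
# Drain each old node so its pods are rescheduled, then delete it.
retire_nodes() {
  for node in "$@"; do
    run oc adm drain "$node" --ignore-daemonsets --delete-local-data
    run oc delete node "$node"
  done
}
```

Retiring nodes one at a time keeps enough capacity around for the evacuated pods.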

Day 8: Certificates and LDAP

Our LDAP server can only be accessed over TLS, and its server certificate is signed by our own CA. So we needed to download the CA certificate and point oauthConfig:identityProviders:provider:ca at the file. After adding that line and restarting the master API, logging in worked.

But one thing was still strange: we had never done this before, so how had it worked until now? Starting with OKD 3.10, the masters run containerized, so the host's system CA roots are not used by default inside the container. If we need a CA certificate, we have to specify it explicitly.
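For reference, a sketch of the master-config.yaml stanza involved, assuming an LDAPPasswordIdentityProvider. The provider name, URL and CA file name are placeholders, and `ldap_provider_yaml` is a hypothetical helper that only prints YAML to merge by hand. We assume a relative `ca` path is resolved against the directory holding master-config.yaml (`/etc/origin/master`), which is also visible inside the containerized master.

```shell
# Sketch: print an LDAP identity provider stanza with the ca field set.
# Usage: ldap_provider_yaml NAME URL CA_FILE   (all values are placeholders)
ldap_provider_yaml() {
  name=$1 url=$2 ca_file=$3
  cat <<EOF
oauthConfig:
  identityProviders:
  - name: "$name"
    challenge: true
    login: true
    mappingMethod: claim
    provider:
      apiVersion: v1
      kind: LDAPPasswordIdentityProvider
      attributes:
        id:
        - dn
        preferredUsername:
        - uid
      bindDN: ""
      bindPassword: ""
      ca: $ca_file
      insecure: false
      url: "$url"
EOF
}
```

After merging the change, the master API needs a restart; on 3.10 masters `master-restart api` should do it.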

Day 9: Logs and Terminal Outage

Logs and Terminal were still not available. After some googling, I checked the masters' logs and found a huge number of x509: certificate signed by unknown authority errors. I redeployed the certificates using:

$ ansible-playbook -i <inventory_file> /usr/share/ansible/openshift-ansible/playbooks/byo/openshift-cluster/redeploy-certificates.yml
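To compare before and after redeploying, the errors can simply be counted. A minimal sketch reading a log stream on stdin; `count_x509_errors` is a hypothetical helper, and where the logs come from is up to you (on 3.10 masters, `master-logs api api 2>&1 | count_x509_errors` is one assumed option).

```shell
# Sketch: count "unknown authority" x509 errors in a log read from stdin.
count_x509_errors() {
  # grep -c prints 0 but exits 1 when nothing matches; mask that status
  # so the helper is safe to use under `set -e`.
  grep -c 'x509: certificate signed by unknown authority' || true
}
```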

However, this caused etcd to fail to start. Checking the nodes, I was surprised to find that the playbook had set the wrong permissions on the /etc/etcd directory and its certificates. I corrected the permissions and restarted etcd, and after that things went fine:

# chmod +x /etc/etcd

# chmod 644 /etc/etcd/ca.crt /etc/etcd/peer.* /etc/etcd/server.*
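The same fix can be wrapped in a function that takes the directory as a parameter (default `/etc/etcd`), so it can be tried on a copy first. It applies exactly the modes used above; note that 644 leaves `peer.key` and `server.key` world-readable, so a narrower mode may be preferable if etcd can still read them. `fix_etcd_perms` and `show_etcd_perms` are hypothetical helpers, and `stat -c` assumes GNU coreutils.

```shell
# Sketch: apply the etcd permission fix from the commands above.
# Usage: fix_etcd_perms [DIR]   (DIR defaults to /etc/etcd)
fix_etcd_perms() {
  dir=${1:-/etc/etcd}
  chmod +x "$dir" &&
    chmod 644 "$dir"/ca.crt "$dir"/peer.* "$dir"/server.*
}

# Print "mode path" for the directory and every file the fix touches.
show_etcd_perms() {
  dir=${1:-/etc/etcd}
  stat -c '%a %n' "$dir" "$dir"/ca.crt "$dir"/peer.* "$dir"/server.*
}
```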

After redeploying the certificates, the OpenShift web console constantly returned 502 errors. OpenShift Origin issue #20005 describes a similar problem, caused by openshift-ansible not regenerating the web console's certificate. Simply deleting the secret and the running pods solves the problem:

$ oc delete secret webconsole-serving-cert -n openshift-web-console

$ oc delete pods -l webconsole=true -n openshift-web-console

Day 10: Rebuild Registry, Router and Metrics

Most services seemed to be running, but new pods failed to start with an ImagePullBackOff error. I tried building an image to test the built-in Docker registry, and pushing the image failed. Yet the registry console worked like a charm, and the images were all there!

I decided to rebuild the whole default project. I deleted the docker-registry deploymentconfig and tried to follow the Setting up the Registry chapter of the documentation with

$ oc adm registry --config=admin.kubeconfig --service-account=registry

but it still didn't work. Pulling an image on a node failed with "HTTP response to a HTTPS client". I quickly realized that I also needed to follow the rest of the procedure to secure and expose the registry, which I hadn't done. Was there an automatic way?

Yes! openshift-ansible actually includes an openshift-hosted part, which can deploy the registry, the registry console and the router in one click. I deleted all of them, set up the variables and ran:

$ ansible-playbook -i <inventory_file> playbooks/openshift-hosted/config.yml

$ ansible-playbook -i <inventory_file> playbooks/openshift-hosted/deploy-router.yml

In my deployment, roles/cockpit-ui/tasks/main.yml passed an unsupported parameter, IMAGE_NAME. Finding that line and deleting it was enough.

And I was happy to see them up and working.
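Deleting that line can be done with `sed`, keeping a backup. A minimal sketch: the default path is relative to the openshift-ansible checkout as in the text, `drop_image_name_param` is a hypothetical helper, and the pattern assumes IMAGE_NAME appears only on the line to remove, which is why the matches are printed first.

```shell
# Sketch: remove the unsupported IMAGE_NAME line, keeping FILE.bak.
# Usage: drop_image_name_param [FILE]
drop_image_name_param() {
  file=${1:-roles/cockpit-ui/tasks/main.yml}
  # Show the lines that will be deleted; stop if there are none.
  grep -n 'IMAGE_NAME' "$file" || return 1
  sed -i.bak '/IMAGE_NAME/d' "$file"
}
```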

Day 12: Logs and Terminal Outage, Again

Logs went down again for no apparent reason. Checking the logs on the running nodes, I found the following errors:

http: TLS handshake error from 10.128.4.69:46722: no serving certificate available for the kubelet

http: TLS handshake error from 10.128.4.69:46740: no serving certificate available for the kubelet

It's strange that the failing TLS handshake is between the user and the node, not between the host and the node.
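To see which clients are hitting these errors, the source addresses can be pulled out of the node log. A minimal sketch assuming IPv4 client addresses and log lines shaped like the ones above; `kubelet_cert_error_clients` is a hypothetical helper, and `journalctl -u origin-node | kubelet_cert_error_clients` assumes the node service is named origin-node.

```shell
# Sketch: print the distinct client IPs behind the kubelet's
# "no serving certificate available" handshake errors (log on stdin).
kubelet_cert_error_clients() {
  grep 'no serving certificate available for the kubelet' |
    sed -E 's/.*TLS handshake error from ([0-9.]+):[0-9]+:.*/\1/' |
    sort -u
}
```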

Googling turned up a related bug, Red Hat BZ#1571515, which should be fixed in the latest version of openshift-ansible by PR#9711.

To check whether you are hitting this bug, run:

$ oc get csr

NAME        AGE   REQUESTOR              CONDITION
csr-25tvg   8h    system:node:infra0     Pending
csr-29mhr   12h   system:node:infra0     Pending
csr-2cswz   17h   system:node:master0    Pending
csr-2gj4h   22h   system:node:infra1     Pending
csr-2h8sx   4h    system:node:master1    Pending
csr-2hf4t   10h   system:node:infra1     Pending
csr-2hzhc   8h    system:node:master1    Pending
csr-2j4jq   11h   system:node:infra0     Pending
csr-2jcnl   20h   system:node:infra1     Pending
....

This prints all the CSRs, and we can see that every one of them is in the Pending condition. To make things work properly, we need to approve all of them:

$ oc get csr | grep Pending | awk -F ' ' '{print $1}' | xargs oc adm certificate approve
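The filtering step of that pipeline can be made a little stricter by matching only the CONDITION column, so a Pending string elsewhere in a row is not picked up. A minimal sketch; `pending_csrs` is a hypothetical helper that reads `oc get csr` output on stdin (the header row is skipped naturally, since its last column is CONDITION).

```shell
# Sketch: print the names of CSRs whose last column (CONDITION) is Pending.
pending_csrs() {
  awk '$NF == "Pending" { print $1 }'
}
```

Usage: `oc get csr | pending_csrs | xargs -r oc adm certificate approve`, where `-r` (GNU xargs) skips the approve call when nothing is pending.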

Ending and Conclusion

After this whole week, OpenShift is working fine, though some services had to be rebuilt. But the main thing I want to say is this: it is extremely important to keep an OpenShift cluster up to date with the latest major version, or else never touch it after a successful deployment if the deployment type is origin. If you already did and ran into problems, I hope this post helps a little.

Happy K8s, and I wish you a happy ops life!